Quantum computers can extract features from data that make classical machine learning models more accurate. We have demonstrated this across several fields, including molecular toxicity prediction, medical image classification, and satellite remote sensing, using IBM quantum processors with up to 156 qubits [1], [2]. The gains are consistent and reproducible: a 2-3% absolute accuracy improvement on satellite imagery, ~5% on breast tumor detection, and ~10% on molecular toxicity classification, all on top of strong classical baselines. But quantum feature extraction has a deployment problem: the quantum processor must process every sample, including at inference time, which makes it impractical for production systems. In this technical post, we introduce classical surrogates: classical models trained to replicate the quantum feature mapping. We show, on the satellite image classification task, that a surrogate preserves the quantum-enhanced performance while making the deployment pipeline entirely classical. The quantum computer running at the quantum-advantage level remains essential, but only for training, that is, to generate the data that the surrogate learns from.

Our classical surrogate makes quantum enhancement scalable and efficient for industrial production.

Quantum Features Work. We Proved It

Our Digitized Quantum Feature Extraction (DQFE) technology [1], [2] uses quantum processors to transform classical data into richer feature representations. By leveraging the physics of quantum systems, DQFE captures complex patterns and correlations in the data that standard classical methods miss. When these quantum-derived features are fed to standard classifiers, performance improves. DQFE is applicable to any tabular data classification problem. Whenever data can be represented as a feature vector, it can be encoded into a quantum circuit and processed through the DQFE pipeline.

We have validated this on three real-world problems spanning different domains and data types:

- Molecular toxicity classification [1]: 156 molecular descriptors processed on IBM Kingston (156 qubits). Quantum features delivered a ~10% improvement in classification accuracy over the classical baseline.

- Breast tumor detection [1]: Ultrasound images from the MedMNIST benchmark, processed through classical feature extraction followed by DQFE. Quantum features delivered a ~5% improvement in classification accuracy over the classical baseline.

- Satellite image classification [2]: Multi-sensor remote-sensing data from the TreeSatAI benchmark, processed on IBM quantum backends. Quantum features delivered a 2-3% absolute improvement in classification accuracy over the classical baseline.

The Problem: You Can't Deploy a Quantum Computer

Here is the tension. If a classifier is trained on quantum features, then every sample it will ever classify must also be quantum-processed, not just during training, but at inference time. Every new molecule to screen, every medical image to diagnose, every satellite image to classify would need to be queued, encoded, executed, and measured on a quantum processor.

For production systems, this is a showstopper. Industrial deployment demands millisecond latency, 24/7 availability, and scalability to millions of samples. Current quantum processors cannot meet any of these requirements, and it may take years before they can.

The result is a paradox: quantum feature extraction improves model performance at the quantum-advantage level, but it is difficult to deploy with current commercial quantum hardware capabilities.

Our Solution: Classical Surrogates Trained on Quantum Data

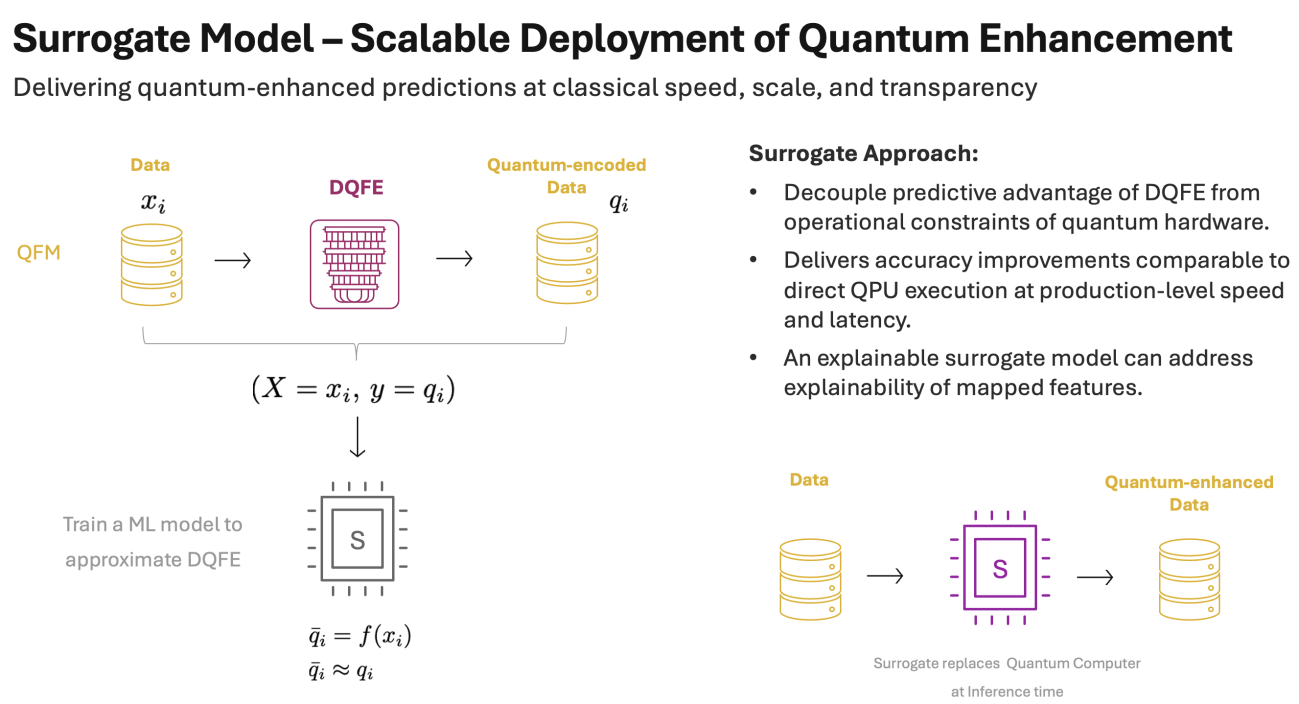

We resolve this conundrum by training a classical model to replicate the quantum feature mapping, then deploying the classical model in place of the quantum processor. This brings quantum enhancement into production.

The key insight is that the quantum mapping, despite relying on inherently noisy quantum measurements, produces features that are stable and consistent. This has been demonstrated by the reproducible accuracy gains across different hardware backends [1], [2]. This means the mapping carries a learnable signal. We can run the quantum processor on a manageable dataset, collect the input-output pairs (classical features in, quantum features out), and train a classical surrogate to learn that relationship.

The surrogate concept is model-agnostic: any regression architecture can serve as the surrogate, chosen according to the complexity of the mapping and the deployment needs. Once trained, the surrogate replaces the quantum processor entirely at inference time.

Fig. 1. The classical surrogate pipeline. Left: the surrogate model is trained on input-output pairs (x_i, q_i) produced by a quantum computer. Classical features go in, quantum features come out, and the surrogate learns this mapping. Right: the trained surrogate replaces the quantum computer at inference time, producing equivalent quantum-like features from classical inputs alone.
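To make the training step in Fig. 1 concrete, here is a minimal sketch using closed-form ridge regression in NumPy. It is an illustration under stated assumptions, not the production DQFE pipeline: `toy_quantum_feature_map` is a hypothetical stand-in for the quantum processor (a fixed smooth map plus small random jitter), and the dataset sizes, the `alpha` value, and all function names are chosen only for the example.

```python
import numpy as np


def toy_quantum_feature_map(X, rng, noise=0.05):
    """Hypothetical stand-in for a QPU run (NOT DQFE): a fixed smooth map
    plus small random jitter that mimics measurement noise."""
    d = X.shape[1]
    mixing = np.random.default_rng(0).normal(size=(d, d)) / np.sqrt(d)
    return np.tanh(0.5 * X @ mixing) + noise * rng.normal(size=X.shape)


def fit_ridge_surrogate(X, Q, alpha=1.0):
    """Fit a multi-output ridge regression X (n, d) -> Q (n, m).

    Returns (W, b) such that Q_hat = X @ W + b.
    """
    x_mean = X.mean(axis=0)
    q_mean = Q.mean(axis=0)
    Xc = X - x_mean
    Qc = Q - q_mean
    # Closed-form ridge solution: (Xc^T Xc + alpha * I) W = Xc^T Qc
    W = np.linalg.solve(Xc.T @ Xc + alpha * np.eye(X.shape[1]), Xc.T @ Qc)
    b = q_mean - x_mean @ W
    return W, b


def surrogate_features(X, W, b):
    """Produce quantum-like features from classical inputs alone."""
    return X @ W + b


def r2_score(Q_true, Q_pred):
    """Coefficient of determination pooled over all output features."""
    ss_res = np.sum((Q_true - Q_pred) ** 2)
    ss_tot = np.sum((Q_true - Q_true.mean(axis=0)) ** 2)
    return 1.0 - ss_res / ss_tot


rng = np.random.default_rng(42)
X_train = rng.normal(size=(400, 8))   # classical features sent to the "QPU"
X_test = rng.normal(size=(100, 8))    # held-out classical features
Q_train = toy_quantum_feature_map(X_train, rng)
Q_test = toy_quantum_feature_map(X_test, rng)

W, b = fit_ridge_surrogate(X_train, Q_train, alpha=1.0)
Q_hat = surrogate_features(X_test, W, b)
print(f"held-out R^2 vs. toy quantum features: {r2_score(Q_test, Q_hat):.3f}")
```

Checking the fit on held-out pairs, as in the last lines, is one simple way to judge whether a given regression architecture is expressive enough for the mapping before swapping it in for the quantum processor.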

Offline Quantum Advantage

The ultimate test: does the surrogate preserve the quantum advantage? We applied it to the satellite image classification task, training a Ridge regressor surrogate on quantum features generated by DQFE on IBM hardware, then evaluating on the same test set. The result speaks for itself.

| Approach | Features / Qubits | Accuracy (%) |
|---|---|---|
| Classical Baseline | 120 | 84 |
| Quantum (DQFE on IBM hardware) | 120 | 86 to 87 |
| Classical Surrogate of DQFE | 120 | 87 |

The surrogate preserves the quantum-enhanced performance while transforming the operational picture:

| | QPU at inference | Surrogate at inference |
|---|---|---|
| Latency | Minutes (queue + execution) | Microseconds |
| Scalability | Limited by hardware availability | Millions of samples |
| Availability | Shared access, maintenance windows | 24/7, any classical server |
| Cost | Per-shot, scales with data volume | Near-zero marginal cost |
| Explainability | Irreversible mapping, opaque | Depends on model choice (e.g. linear models are fully interpretable) |

The quantum computer's role shifts from a runtime dependency to a data-generation step. The quantum advantage is distilled into a classical model that inherits the performance while meeting industrial deployment requirements.
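The latency of a surrogate depends on the model and the server, so it is worth measuring on your own hardware. The sketch below times a batched linear surrogate with 120 input and 120 output features, matching the feature count in the results table. The weights are random placeholders rather than a trained surrogate, and the function name and batch size are assumptions made for the example.

```python
import time

import numpy as np


def time_linear_surrogate(n_samples=20_000, n_features=120, repeats=5):
    """Return the best-of-`repeats` amortized per-sample latency in seconds
    for a batched linear surrogate Q = X @ W + b.

    W and b are random placeholders; only the cost of the arithmetic matters.
    """
    rng = np.random.default_rng(0)
    X = rng.normal(size=(n_samples, n_features))
    W = rng.normal(size=(n_features, n_features))
    b = rng.normal(size=n_features)

    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        Q = X @ W + b
        best = min(best, time.perf_counter() - start)
    assert Q.shape == (n_samples, n_features)
    return best / n_samples


per_sample = time_linear_surrogate()
print(f"amortized latency: {per_sample * 1e6:.3f} microseconds per sample")
```

Note that this measures amortized latency over a batch. A single-sample request through a serving stack adds overhead that this sketch does not capture, and a nonlinear surrogate will cost more per sample.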

Looking Ahead

The surrogate concept is general. It does not depend on the current version of DQFE and applies wherever a quantum processor produces features that enhance classical machine learning. The pattern is:

- Use the quantum processor to generate quantum features for a manageable dataset.

- Train a classical surrogate to replicate the mapping.

- Deploy the surrogate at scale, retaining the performance gains.
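The three steps above can be sketched end to end as follows. This is an illustrative outline, not our production code: `run_quantum_feature_extraction` is a hypothetical placeholder for the quantum job (here a fixed nonlinear map so the example runs offline), the surrogate is a ridge regression solved in closed form, and the file name, class name, and batch size are assumptions.

```python
import os
import tempfile

import numpy as np


def run_quantum_feature_extraction(X):
    """Step 1 placeholder (hypothetical): in practice this is a QPU job.
    A fixed nonlinear map stands in so the sketch runs offline."""
    d = X.shape[1]
    mixing = np.random.default_rng(7).normal(size=(d, d)) / np.sqrt(d)
    return np.sin(0.5 * X @ mixing)


def train_surrogate(X, Q, alpha=1e-2):
    """Step 2: ridge regression with a bias column, solved in closed form."""
    A = np.hstack([X, np.ones((X.shape[0], 1))])
    reg = alpha * np.eye(A.shape[1])
    reg[-1, -1] = 0.0  # do not penalize the bias term
    return np.linalg.solve(A.T @ A + reg, A.T @ Q)


class DeployedSurrogate:
    """Step 3: a feature extractor loaded from disk, with no QPU dependency."""

    def __init__(self, path):
        self.weights = np.load(path)

    def transform(self, X, batch_size=10_000):
        # Process in chunks so memory stays bounded on large inputs.
        out = []
        for start in range(0, X.shape[0], batch_size):
            chunk = X[start:start + batch_size]
            A = np.hstack([chunk, np.ones((chunk.shape[0], 1))])
            out.append(A @ self.weights)
        return np.vstack(out)


rng = np.random.default_rng(0)

# Step 1: quantum features for a manageable dataset.
X_small = rng.normal(size=(500, 6))
Q_small = run_quantum_feature_extraction(X_small)

# Step 2: fit the surrogate and persist it.
weights = train_surrogate(X_small, Q_small)
path = os.path.join(tempfile.mkdtemp(), "surrogate.npy")
np.save(path, weights)

# Step 3: load the surrogate elsewhere and serve features at scale.
deployed = DeployedSurrogate(path)
X_prod = rng.normal(size=(25_000, 6))
Q_prod = deployed.transform(X_prod)
print(Q_prod.shape)  # (25000, 6)
```

The only artifact that crosses from the quantum side to production is the saved weight file, which is what turns the quantum processor into a training-time dependency rather than a runtime one.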

This redefines the role of quantum computers in the ML stack: not runtime components present at every inference, but training-time oracles that produce data too complex for classical methods to generate from scratch, yet structured enough for classical methods to learn from examples. As quantum hardware improves, the surrogate approach ensures each generation's gains translate directly into deployable, production-ready models.

But a surrogate is only as good as what it learns from. A surrogate trained on mediocre quantum features will reproduce mediocre results. The value of the surrogate is entirely determined by the quality of the quantum feature extraction it replaces, and that is where Kipu Quantum leads. Our DQFE technology, built on proprietary quantum algorithms and validated across domains on real quantum hardware, produces the quantum features that are worth surrogating.

The best classical surrogates start with the best quantum features, obtained at the quantum-advantage level.

Bibliography

- [1] Simen, Anton, et al. Digitized Counterdiabatic Quantum Feature Extraction. arXiv preprint arXiv:2510.13807, 2025.

- [2] Zhang, Qi, et al. Quantum-enhanced satellite image classification. arXiv preprint arXiv:2602.18350, 2026.

- [3] Simen, Anton, et al. Quenched Quantum Feature Maps. arXiv preprint arXiv:2508.20975, 2025.

- [4] Frankle, Jonathan, et al. The Lottery Ticket Hypothesis: Finding Sparse, Trainable Neural Networks. arXiv preprint arXiv:1803.03635, 2018.
